Performance reviews that engineers respect—and managers can defend.

Move beyond subjective ratings and recency bias. Evaluate engineers and product teams on actual impact: cross-team collaboration, technical influence, and scope of problems solved—backed by data from the tools your team already uses.

Performance Reviews for Technology Companies

Your engineers know when a performance review is fake. Missing data is usually why.

A senior engineer mentors six junior devs, drives three cross-team initiatives, and ships the hardest project of the quarter. Their manager—who joined six months ago—rates them "meets expectations" because the last sprint had a late deployment.

That's recency bias. It's also the default mode of performance reviews that rely on manager memory.

The damage is real: Engineers who feel the process is rigged stop trusting management. High performers start looking at offers from companies where evaluations feel fair. Calibration sessions become political. Promotions go to the most visible, not the most impactful.

Tech companies tend to expect more analytical rigor than companies in most other industries. Your engineers apply that standard to their own evaluations. When your performance process can't meet it, your best people notice first.

Confirm brings the same data discipline to performance reviews that engineers bring to systems design: behavioral evidence, cross-team signals, and calibration that doesn't depend on a single manager's subjective memory.

Engineering reviews tied to leveling guides, not manager intuition

Generic performance review software has no concept of Staff Engineer vs. Senior Engineer. Confirm structures reviews around your leveling guides and competency frameworks—so engineers understand exactly what they're being evaluated on, and managers have consistent criteria to calibrate against. The result: fewer calibration arguments, clearer promotion decisions, and engineers who trust the process.

Role and level-specific templates

IC and manager tracks. Different templates for L3 vs. Staff. Each template reflects the actual expectations at that level in your organization.

Leveling criteria built into reviews

Embed your leveling guide directly into the review form. Managers evaluate against explicit criteria, not vague impressions.

Calibration that respects the rubric

When all managers use the same criteria, calibration is about applying them consistently, not rewriting them in the session room.

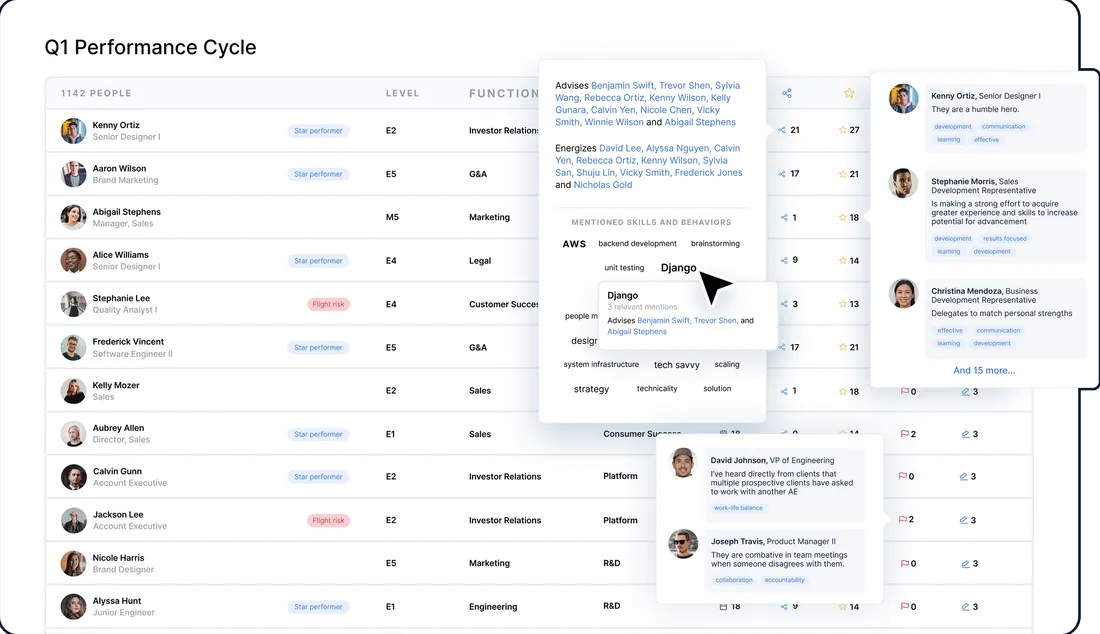

Capture cross-team impact that managers can't observe directly

The most impactful engineers often do their most valuable work outside their manager's direct view—unblocking other teams, driving architectural decisions, mentoring engineers in other orgs. Confirm's organizational network analysis captures these collaboration patterns from Slack, GitHub, and Jira. Senior engineers get credit for influence that would otherwise be invisible to their manager.

Who consults whom for technical expertise

ONA shows which engineers other teams rely on for technical guidance—a strong signal of scope and impact that managers rarely see.
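As a rough illustration of the "who consults whom" signal, the sketch below counts how many distinct other teams turn to each engineer. It is not Confirm's implementation: the record format, the names, and the `cross_team_reach` and `rank_by_reach` helpers are all hypothetical, and a real pipeline would first have to derive these records from Slack, GitHub, or Jira activity.

```python
from collections import defaultdict

# Hypothetical interaction records: (asker, asker_team, expert, expert_team).
# Assumed to have been extracted already from collaboration tools.
interactions = [
    ("ana", "payments", "raj", "platform"),
    ("ben", "search", "raj", "platform"),
    ("cy", "platform", "raj", "platform"),   # same team, so not cross-team
    ("ana", "payments", "raj", "platform"),  # repeat request from the same team
    ("ben", "search", "dee", "payments"),
]


def cross_team_reach(records):
    """Map each expert to the set of *other* teams that consulted them."""
    reach = defaultdict(set)
    for _asker, asker_team, expert, expert_team in records:
        if asker_team != expert_team:
            reach[expert].add(asker_team)
    return dict(reach)


def rank_by_reach(records):
    """Sort experts by number of distinct outside teams, then by name."""
    reach = cross_team_reach(records)
    pairs = [(expert, len(teams)) for expert, teams in reach.items()]
    return sorted(pairs, key=lambda pair: (-pair[1], pair[0]))


ranking = rank_by_reach(interactions)
print(ranking)
```

Counting distinct teams rather than raw messages is a deliberate choice in this sketch: it keeps one chatty requester from inflating an engineer's apparent reach.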

Cross-functional influence mapped

Engineers who drive initiatives across teams leave collaboration fingerprints in the data. Confirm surfaces them.

Mentorship contribution quantified

Junior engineers grow fastest around specific seniors. Track who's actually developing the next generation of engineers.

What goes into a technology performance review

Confirm evaluates engineers and product managers across seven dimensions grounded in how top tech companies think about performance:

Quality of technical decisions, scope of problems solved, and architectural influence on the codebase.

Reliability, scope management, autonomy in getting things done, and consistent delivery against commitments.

How other teams depend on this engineer, unblocking behaviors, and contribution to cross-functional initiatives.

Technical writing, design doc quality, RFC authorship, and ability to align teams around technical direction.

Investment in junior engineers, code review quality, and contribution to team knowledge and capability.

Proactive identification of problems, judgment about tradeoffs, and end-to-end ownership of outcomes.

Working at the right level, amplifying the team's output, and operating beyond individual contributor scope.

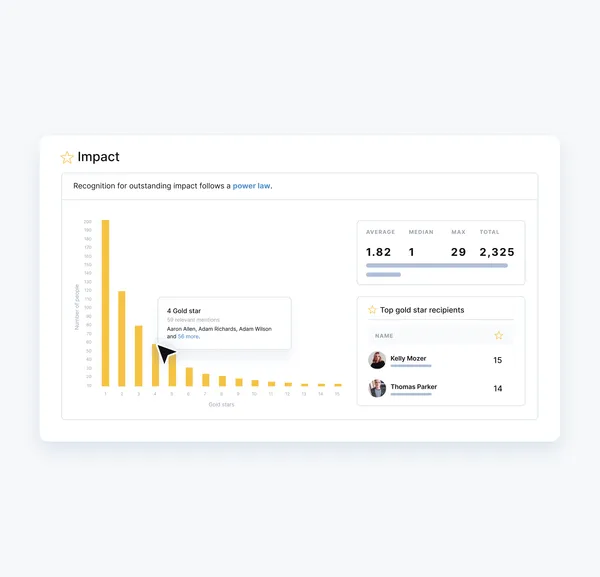

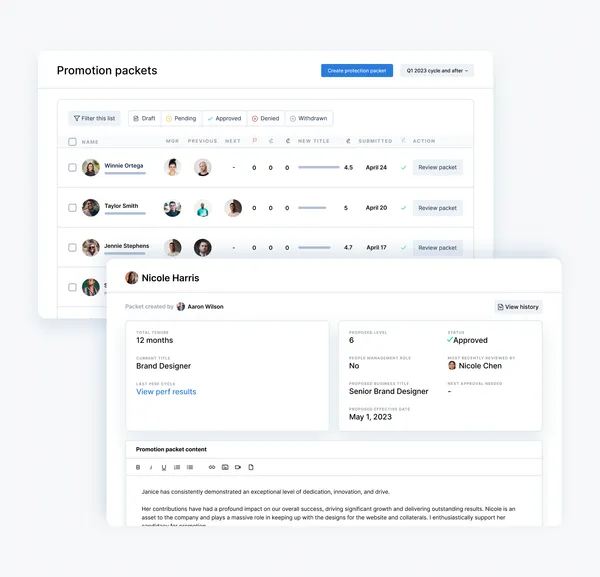

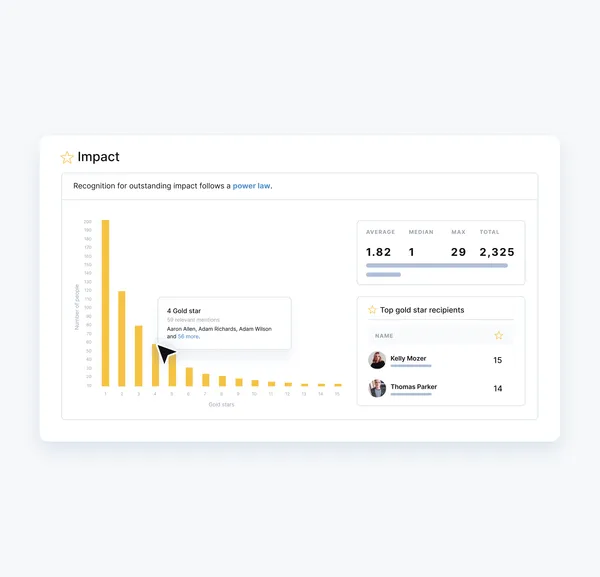

Calibration that produces consistent promotions—not political outcomes

Calibration sessions in tech often devolve into the loudest manager winning. Confirm surfaces rating inconsistencies before the session starts so the conversation is grounded in data. See which managers inflate or deflate ratings, compare distributions across teams, and arrive at calibration with AI-recommended adjustments instead of blank slides.
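One simple way to picture "which managers inflate or deflate ratings" is to compare each manager's average rating with the organization-wide average. The sketch below does that under stated assumptions: the ratings, the 1 to 5 scale, the 0.5 threshold, and the function names are invented for illustration and do not describe how Confirm computes its adjustments.

```python
from statistics import mean

# Hypothetical ratings on a 1-5 scale, grouped by the manager who gave them.
ratings_by_manager = {
    "manager_a": [4, 5, 5, 4],
    "manager_b": [3, 3, 2, 3],
    "manager_c": [3, 4, 3, 4],
}


def rating_offsets(ratings):
    """Return each manager's mean rating minus the org-wide mean."""
    all_ratings = [r for given in ratings.values() for r in given]
    org_mean = mean(all_ratings)
    return {m: round(mean(given) - org_mean, 2) for m, given in ratings.items()}


def flag_outliers(offsets, threshold=0.5):
    """Label managers whose offset is at least `threshold` in either direction."""
    return {
        m: ("inflates" if delta > 0 else "deflates")
        for m, delta in offsets.items()
        if abs(delta) >= threshold
    }


offsets = rating_offsets(ratings_by_manager)
flags = flag_outliers(offsets)
print(offsets)
print(flags)
```

A gap from the org mean is only a prompt for discussion. A team may genuinely be stronger or weaker than average, which is why the comparison belongs in the calibration conversation rather than replacing it.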

Pre-calibration data packages

Every manager sees the same comparative data before the session. No surprises, no ambushes.

Promotion readiness signals

ONA data shows who's operating at the next level before a manager nominates them. Data-backed promotion recommendations.

Bias detection

Identify where remote engineers, underrepresented groups, or quieter contributors are systematically rated lower relative to behavioral evidence.
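To make "rated lower relative to behavioral evidence" concrete, the sketch below computes the average gap between a manager's rating and an evidence-based score for each group. Everything here is an assumption for illustration: the group labels, the shared 1 to 5 scale, the `evidence_score` field, and the `mean_gap_by_group` helper are hypothetical, and a real analysis would need larger samples and a significance check.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical records: (group, manager_rating, evidence_score),
# with both numbers assumed to be on the same 1-5 scale.
records = [
    ("remote", 3, 4), ("remote", 3, 4), ("remote", 4, 4),
    ("onsite", 4, 4), ("onsite", 5, 4), ("onsite", 3, 3),
]


def mean_gap_by_group(rows):
    """Average (rating - evidence) per group; negative means under-rated."""
    gaps = defaultdict(list)
    for group, rating, evidence in rows:
        gaps[group].append(rating - evidence)
    return {group: round(mean(values), 2) for group, values in gaps.items()}


gaps = mean_gap_by_group(records)
print(gaps)
```

In this made-up data, remote engineers are rated about two thirds of a point below what the evidence score suggests, while onsite engineers are rated slightly above it.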

Explore the whole platform

Performance Reviews

Modern performance management powered by ONA and AI.

Engagement Surveys

Turn employee feedback into action, faster.

OKRs and Goals

Track OKRs and career goals in one place.

Talent Management

Make better talent decisions, without the bias.

Feedback and Growth

Empower continuous feedback and development.

See Confirm in action

See why forward-thinking enterprises use Confirm to make fairer, faster talent decisions and build high-performing teams.