Editor's Note: This article was originally published on David Murray's Substack.

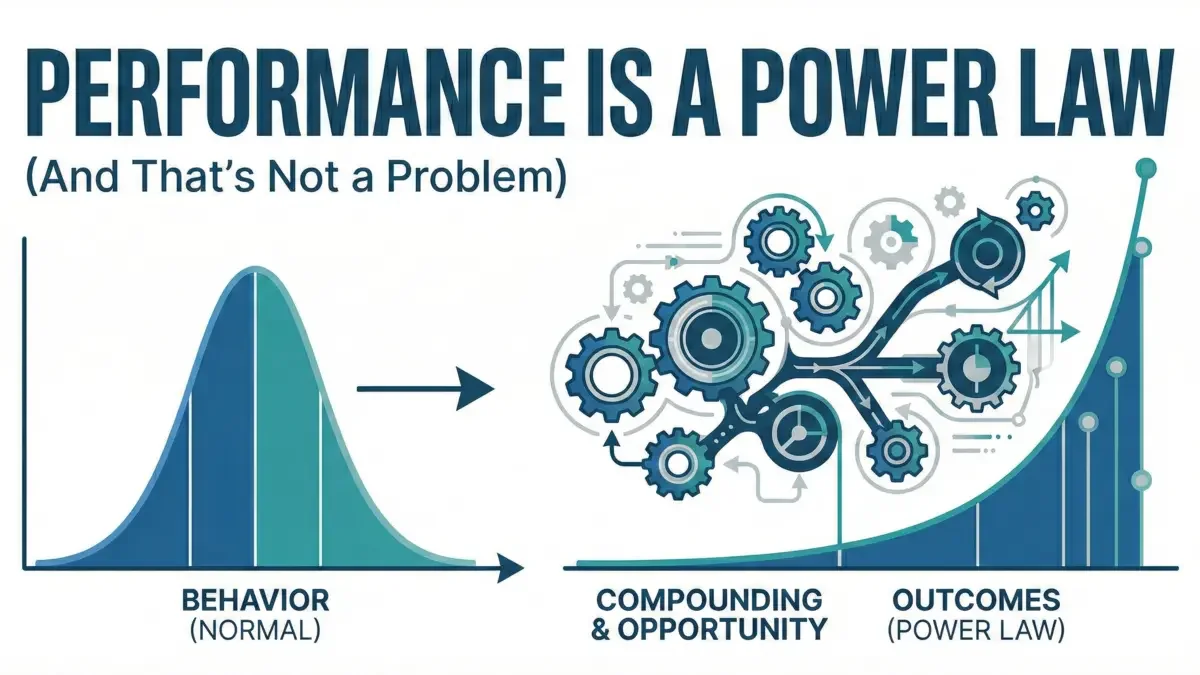

I read a recent piece arguing that performance isn't a power law: that what look like extreme differences in output are really just normally distributed behavior plus uneven opportunity. It's a thoughtful argument. The baseball analogy is sharp. The Campbell references are solid.

But I think it's mistaken. Or maybe more precisely: it's answering a cleaner question than the one businesses actually face.

The Behavior vs. Output Split Breaks Down

The central move in the essay is to separate "performance" (behavior under someone's control) from "outputs" (the results of that behavior). In baseball, that distinction makes sense. You can look at swing mechanics separately from home runs. You can evaluate plate discipline apart from batting average.

In knowledge work, that line is blurry at best.

Take a product manager choosing what to build next. Is that behavior or output? The act of choosing is the work. And the only way to evaluate whether it was a good choice is to look at what happened. There isn't some clean behavioral metric floating free from consequences.

Same with an engineer deciding on system architecture. Or a salesperson walking away from a bad deal. Or a CEO placing a strategic bet. In each case, the "behavior" only has meaning because of the result. Judgment isn't separable from outcomes; it's expressed through them.

When performance gets defined as "behavior under an individual's control," it fits repeatable, tightly scoped work. It doesn't map as cleanly onto complex, creative, or strategic roles, where what we actually care about is whether someone's decisions create value.

Compounding Isn't a Distortion

The article concedes that small differences can compound over time, but treats that compounding almost like statistical contamination, something to adjust for so we can see the "true" distribution underneath.

That framing misses something important. Compounding is the mechanism.

A developer who makes slightly better design decisions earns trust. That trust leads to more consequential projects. Those projects accelerate learning. Stronger peers want to collaborate. The network improves. Opportunities expand. The gap widens.

Not because the initial difference was enormous, but because advantages stack.

This is the Matthew Effect, which Robert Merton documented in science: "eminent scientists get disproportionately great credit for their contributions while relatively unknown scientists tend to get disproportionately little credit for comparable contributions." Better-known researchers attract more resources, better collaborators, and more opportunities, creating a self-reinforcing cycle of advantage.

Calling this something to "control away" is like trying to understand investing while adjusting out compound interest. Yes, it makes the math tidier. It also removes the thing that generates the returns.
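To make the compound-interest comparison concrete, here is a minimal sketch. The 2% edge per project cycle and the 40-cycle horizon are hypothetical numbers chosen for illustration, not figures from any study, and `relative_gap` is just a helper name I made up.

```python
def relative_gap(edge_per_cycle: float, cycles: int) -> float:
    """Ratio between two trajectories when one grows `edge_per_cycle`
    faster than the other in every cycle (simple compounding)."""
    return (1.0 + edge_per_cycle) ** cycles


# Hypothetical inputs: a 2% edge per cycle, checked at a few horizons.
horizons = (0, 10, 20, 40)
gaps = [relative_gap(0.02, t) for t in horizons]

for t, gap in zip(horizons, gaps):
    print(f"after {t:>2} cycles: {gap:.2f}x")
```

Under these assumptions the gap starts at 1.00x and ends at about 2.21x after 40 cycles. Nothing about the per-cycle edge changed; the stacking did the work.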

Getting more at-bats isn't random noise. Staying healthy, being selected, earning trust: those are part of performance. So is being given bigger problems because you've proven you can handle them. If you strip out opportunity entirely, you're not isolating performance. You're removing one of the ways performance expresses itself.

The Baseball Example Doesn't Settle It

The essay shows that home runs follow a power law, but when you normalize for plate appearances and look at OPS, the distribution looks closer to normal. The implication is that underlying capability is normally distributed, and the fat tails come from exposure.

But even in that normalized world, the difference between average and elite is enormous. A player three standard deviations above the mean isn't just "a bit better." In practical terms, they're transformative.

And importantly, the ability to sustain high performance over time (staying on the field, continuing to be selected) isn't separable from capability. Durability, consistency, adaptability: those are skills too.

Clean statistical isolation is useful for analysis. It doesn't automatically tell us what matters most in real organizations.

Maybe Complex Work Really Does Have Fat Tails

The O'Boyle and Aguinis research gets dismissed because it focuses on outputs: publications, citations, elections won, box office revenue. But in many domains, output is the performance.

A researcher whose work has no impact isn't high-performing in any meaningful sense. A politician who never wins doesn't accumulate influence. An entertainer no one watches doesn't shape culture.

Their analysis of 633,000+ individuals across 198 samples found that 94% of performance datasets did not conform to normal distributions; they followed power law patterns instead. And this wasn't limited to simple tasks. The effect was strongest in complex, high-impact domains: research productivity, entertainment, elite athletics, and politics.

It's possible the normal distribution assumption simply doesn't hold for complex, high-variance work.

Many of these roles are multiplicative. You don't just need one strength. You need several: judgment, communication, timing, credibility, execution. The ability-motivation-opportunity (AMO) framework in organizational research suggests these components don't simply add together; they interact. If performance depends on multiple factors working together, small differences across each dimension can multiply into very large differences in results.

If performance has five components, and you need to be good at all of them:

- Average performer: 0.8 × 0.8 × 0.8 × 0.8 × 0.8 = 0.33

- High performer: 1.2 × 1.2 × 1.2 × 1.2 × 1.2 = 2.49

That's roughly a 7.6x difference (1.5 to the fifth power) from just 1.5x differences in each component.

That kind of system won't naturally produce neat bell curves. It will skew.
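Here is a rough sketch of that claim. It redoes the five-component arithmetic, then simulates people whose five component scores are each drawn from a bell curve and multiplied together. The mean of 1.0, the standard deviation of 0.2, and the sample size are my assumptions for illustration. A product of normal components gives a right-skewed, roughly lognormal shape. That shows skew, but it is not proof of a strict power law.

```python
import random
import statistics


def product_of_components(levels):
    """Multiply component scores together (the multiplicative model)."""
    result = 1.0
    for level in levels:
        result *= level
    return result


# The worked example from the text: five components at 0.8 vs. 1.2.
average = product_of_components([0.8] * 5)
high = product_of_components([1.2] * 5)
ratio = high / average  # equals 1.5 ** 5


def simulate_outputs(n_people=20000, n_components=5, mean=1.0, sd=0.2, seed=0):
    """Draw each component from a normal distribution, then multiply.
    Components are floored at 0.01 so a rare negative draw cannot flip signs."""
    rng = random.Random(seed)
    outputs = []
    for _ in range(n_people):
        components = [max(0.01, rng.gauss(mean, sd)) for _ in range(n_components)]
        outputs.append(product_of_components(components))
    return outputs


outputs = simulate_outputs()
mean_output = statistics.mean(outputs)
median_output = statistics.median(outputs)

print(f"average={average:.2f} high={high:.2f} ratio={ratio:.2f}x")
print(f"simulated mean={mean_output:.2f} median={median_output:.2f}")
```

In the simulation the mean sits above the median, which is the signature of a right tail: most people land a little below the average, and a few land far above it, even though every input was bell-shaped.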

Superstar Economics and Preferential Attachment

Sherwin Rosen's work on "superstar economics" helps explain why small differences in talent can yield enormous differences in rewards. In markets where technology allows one performer to serve many customers (a concert can be recorded, a software engineer's code can scale), relative advantage matters more than absolute difference. The best captures disproportionate share.

Similarly, network science has shown how preferential attachment, where "the rich get richer", naturally generates power law distributions. In career networks, project allocation, and reputation accumulation, those with early advantages attract more opportunities, which generate more advantages. This isn't corruption or market failure. It's emergent structure.
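A toy version of "the rich get richer" can be sketched as follows. It is a simplified urn-style allocation where each new project goes to someone with probability proportional to one plus the projects they already hold. It is not the Barabási-Albert growth model itself, and the team size, project count, and function names are hypothetical. The point is only to compare concentration against a purely random allocation.

```python
import random


def allocate_projects(n_people, n_projects, preferential, seed=0):
    """Hand out projects one at a time.
    preferential=True: chance of winning is proportional to 1 + projects held.
    preferential=False: every person is equally likely each time."""
    rng = random.Random(seed)
    counts = [0] * n_people
    people = list(range(n_people))
    for _ in range(n_projects):
        if preferential:
            weights = [1 + c for c in counts]
            winner = rng.choices(people, weights=weights, k=1)[0]
        else:
            winner = rng.randrange(n_people)
        counts[winner] += 1
    return counts


def top_share(counts, fraction=0.1):
    """Share of all projects held by the top `fraction` of people."""
    ranked = sorted(counts, reverse=True)
    k = max(1, int(len(ranked) * fraction))
    return sum(ranked[:k]) / sum(ranked)


preferential_counts = allocate_projects(100, 5000, preferential=True)
uniform_counts = allocate_projects(100, 5000, preferential=False)

print(f"top 10% share, preferential: {top_share(preferential_counts):.0%}")
print(f"top 10% share, uniform:      {top_share(uniform_counts):.0%}")
```

Everyone starts identical in both runs. The only difference is whether past allocation influences future allocation, and that alone is enough to concentrate opportunity in the top decile.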

What Companies Actually Care About

There's also a practical angle here.

If one leader creates ten times the impact of another, through revenue, strategy, or team performance, does it matter whether their "underlying behavioral capability" is two times better or ten times better?

Companies pay for value created. They don't hire "inputs." They hire people to change outcomes.

Focusing on behavior because it's "controllable" assumes a few things: that we know which behaviors drive results, that they're teachable, and that development reliably outweighs selection. In some roles, that's true. In others, especially senior or highly creative roles, it's less obvious. Judgment isn't easily decomposed into coachable micro-behaviors. Often, you recognize it only after seeing its effects.

The original 10x programmer research (Sackman, Erikson, and Grant's 1968 study) found performance ratios of 10:1 among experienced programmers on the same tasks. Subsequent research has replicated similar variance in software engineering contexts. Whether you attribute that to "talent" or "multiplicative skill interaction" or "compounded learning advantage," the output difference is real and material.

On Compensation

Where the original article is strongest is on compensation. Extreme pay dispersion can be destabilizing. It can hurt morale, collaboration, and trust.

But that's a separate question.

You can believe that performance outcomes are heavily skewed and still design compensation systems that moderate inequality for the sake of team health. Those two positions don't conflict.

The question isn't "Is performance power-law distributed?" It's "What compensation structure produces the best organizational outcomes?" That's a normative question, not a purely statistical one.

Implications for Performance Management

If performance in many roles has fat tails, what follows?

You don't abandon development. But you also don't pretend that everyone's impact will cluster neatly around the mean.

You hire for baseline capability. Then you deliberately create conditions where compounding can happen: meaningful projects, rapid feedback, strong collaborators, visible trust.

And you measure outcomes. Not because behaviors don't matter, but because in complex work, the quality of thinking shows up in consequences.

The goal isn't to force the distribution into a bell curve. It's to help more people plug into the mechanisms that drive outsized impact.

Both selection and development matter. The power law view doesn't mean "only hire superstars and ignore everyone else." It means understanding that small differences in capability, given the right conditions and opportunities, can compound into large differences in impact, and designing systems accordingly.

The Framing That Matters

The original essay ends with the line: "The performance definition we choose will become the performance we create."

That's right. But here's another way to put it:

If we define performance narrowly as observable effort, we'll optimize for activity and compliance.

If we define performance as the interaction of capability, judgment, opportunity, and compounding, we'll optimize for leverage and impact, even if it's messier and less evenly distributed.

The second view is harder to measure. It's less tidy. It may feel less fair at a glance.

It's also closer to how value actually gets created.

Performance looks like a power law not because people are inherently unequal, but because the systems we operate in amplify small differences. Complex work is multiplicative. Opportunities stack. Trust compounds.

That's not a statistical illusion. It's how organizations work.

The Failure of Traditional Performance Measurement

If the power law view is closer to reality, it raises an uncomfortable question: how well do traditional performance systems actually measure performance?

Not well, it turns out.

The most common approach, forcing performance ratings into a bell curve, assumes the very distribution the evidence contradicts. When you require that performance ratings follow a normal distribution (say, 10% top performers, 70% meets expectations, 20% needs improvement), you're not measuring reality. You're enforcing a statistical fiction.
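To see what a forced distribution hides, here is a small sketch that applies the 10/70/20 split above to heavy-tailed outputs. The outputs are drawn from a Pareto distribution with shape 1.5, which is purely an assumption for illustration, as are the bucket labels and helper names.

```python
import random


def forced_buckets(outputs, top=0.10, middle=0.70):
    """Rank outputs and cut them into three rating buckets by headcount."""
    ranked = sorted(outputs, reverse=True)
    n_top = round(len(ranked) * top)
    n_mid = round(len(ranked) * middle)
    return {
        "top": ranked[:n_top],
        "meets": ranked[n_top:n_top + n_mid],
        "needs_improvement": ranked[n_top + n_mid:],
    }


def spread(bucket):
    """Ratio of the largest to the smallest output inside one bucket."""
    return max(bucket) / min(bucket)


rng = random.Random(0)
# Assumed heavy-tailed outputs; paretovariate always returns values >= 1.
outputs = [rng.paretovariate(1.5) for _ in range(1000)]
buckets = forced_buckets(outputs)

for name, members in buckets.items():
    print(f"{name:>17}: n={len(members):>3} spread={spread(members):.1f}x")
```

Under these assumptions, everyone in the top bucket gets the same label even though the best of them produces many times what the weakest does. The rating scale flattens exactly the part of the distribution where the differences are largest.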

But even when companies abandon forced rankings, manager ratings remain deeply flawed. Research by Scullen, Mount, and Goff analyzing 4,392 managers found that 62% of the variance in performance ratings was attributable to idiosyncratic rater effects (the manager's own biases, rating tendencies, and personality), not to the actual performance of the person being rated.

In other words, your rating tells us more about your manager than about you.

The bell curve assumes a stable underlying construct, clean measurement, and independent observations. Manager ratings rarely satisfy any of those conditions. If roughly 60% of the variance in ratings is rater bias, then forcing performance into a bell curve isn't just statistically questionable; it's operationally misleading. You're normalizing bias.
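A back-of-the-envelope simulation shows how much that rater share limits what a rating can tell you. It assumes a deliberately generous model: a rating is true performance plus an independent rater effect, the rater effect accounts for 62% of rating variance, and all of the remaining 38% is true performance with no other error. That model is my simplification, not the structure estimated in the Scullen, Mount, and Goff paper.

```python
import math
import random


def pearson(xs, ys):
    """Plain Pearson correlation, written out to avoid version-specific helpers."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)


def simulate_ratings(n=20000, rater_share=0.62, seed=0):
    """rating = performance + rater effect, with variances split so the
    rater effect makes up `rater_share` of total rating variance."""
    rng = random.Random(seed)
    perf_sd = math.sqrt(1.0 - rater_share)
    rater_sd = math.sqrt(rater_share)
    performance = [rng.gauss(0.0, perf_sd) for _ in range(n)]
    ratings = [p + rng.gauss(0.0, rater_sd) for p in performance]
    return performance, ratings


performance, ratings = simulate_ratings()
r = pearson(performance, ratings)
print(f"correlation={r:.2f}, variance explained={r * r:.0%}")
```

Even in this best case, ratings explain only about 38% of the variance in true performance. Any additional measurement error pushes that number lower.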

This creates a perverse outcome: the people who need the most development often aren't the ones identified by traditional systems.

When we analyze organizational network data alongside traditional performance ratings, a pattern emerges: once you look beyond manager ratings to patterns of peer-recognized value creation, 80-90% of meaningful contribution signals come from people who aren't the obvious, highly visible stars.

Why? Because 360s and manager ratings tend to select for visibility, extraversion, and network centrality, not impact.

The same network analysis consistently reveals another group: quiet, high-impact contributors who appear as power law performers when you measure actual work patterns and outcomes. They may be introverted. They may not be tightly networked in the social sense. But their contributions show up clearly in collaboration patterns, knowledge transfer, and problem-solving.

These aren't power law performers because of how they're networked. They're power law performers because of the impact they make. The network data simply makes visible what manager ratings miss.

This matters because if performance genuinely follows a power law in knowledge work, and our measurement systems are roughly 60% noise, we're systematically misallocating development resources, misjudging talent, and losing the people who matter most.

If our measurement system is biased and noisy, and the evidence suggests it is, then the bell curve isn't just descriptively wrong. It's structurally unjust.

What This Means for Confirm

If we take this seriously, our job isn't just to label performance. It's to understand how small capability differences turn into large outcome differences, and make that process visible.

That means spotting emerging talent before the gaps are obvious. Making sure high-potential people get meaningful opportunities. Surfacing patterns in decision-making and collaboration that correlate with impact. Helping managers see where compounding is happening, and where it's blocked.

It also means building systems that measure actual impact, not manager perception. Systems that surface the quiet contributors traditional reviews miss. Systems that help organizations understand how performance creates value, not merely who performed.

We're not merely measuring who won. We're trying to understand how winning happens, so organizations can create more of it.

That's a harder product to build. It's also a more honest one.

See How Confirm Measures Real Impact

If performance follows a power law, the way you identify and retain your highest contributors matters enormously. See the Talent Density Playbook for a framework on measuring and improving your ratio of exceptional performers. Traditional performance reviews miss 80-90% of your quiet, high-impact contributors. Confirm's organizational network analysis reveals the hidden performers driving your business, before your competitors hire them away.

References

Campbell, J. P. (1990). Modeling the performance prediction problem in industrial and organizational psychology. In M. D. Dunnette & L. M. Hough (Eds.), Handbook of industrial and organizational psychology (Vol. 1, 2nd ed., pp. 687-732). Consulting Psychologists Press.

Merton, R. K. (1968). The Matthew effect in science. Science, 159(3810), 56-63.

O'Boyle, E., & Aguinis, H. (2012). The best and the rest: Revisiting the norm of normality of individual performance. Personnel Psychology, 65(1), 79-119.

Aguinis, H., Bradley, K. J., & Brodersen, A. (2014). Industrial-organizational psychologists in business schools: Brain drain or eye opener? Industrial and Organizational Psychology, 7(3), 284-303.

Crawford, G. C., Aguinis, H., Lichtenstein, B., Davidsson, P., & McKelvey, B. (2015). Power law distributions in entrepreneurship: Implications for theory and research. Journal of Business Venturing, 30(5), 696-713.

Blumberg, M., & Pringle, C. D. (1982). The missing opportunity in organizational research: Some implications for a theory of work performance. Academy of Management Review, 7(4), 560-569.

Bos-Nehles, A., Renkema, M., & Janssen, M. (2023). Examining the Ability, Motivation and Opportunity (AMO) framework in HRM research: Conceptualization, measurement and interactions. International Journal of Management Reviews, 25(2), 725-748.

Rosen, S. (1981). The economics of superstars. American Economic Review, 71(5), 845-858.

Barabási, A.-L., & Albert, R. (1999). Emergence of scaling in random networks. Science, 286(5439), 509-512.

Sackman, H., Erikson, W. J., & Grant, E. E. (1968). Exploratory experimental studies comparing online and offline programming performance. Communications of the ACM, 11(1), 3-11.

Scullen, S. E., Mount, M. K., & Goff, M. (2000). Understanding the latent structure of job performance ratings. Journal of Applied Psychology, 85(6), 956-970.

Power Law Performance: Practical Implications for Managers

Understanding that performance follows a power law changes how you should run your team. Here are the three most important practical implications:

1. Talent Density Beats Headcount

Adding average performers rarely changes output meaningfully. Adding one top performer to a team can shift results more than hiring three average performers. Netflix's talent density philosophy is built on this insight.

2. Retention Focus Should Be Concentrated

If 20% of your employees drive 80% of outcomes, losing even one or two high performers creates disproportionate damage. Standard retention programs that treat all employees equally underinvest where it matters most.

3. Performance Measurement Must Capture Real Contribution

Traditional rating systems often compress performance distributions: everyone ends up "3 out of 5." This makes it impossible to see the power law. Organizational network analysis reveals actual contribution patterns that ratings miss.