Most performance reviews are theater. Managers ask vague questions, employees give careful answers, and the company strategy sits untouched in a slide deck nobody opens. The review ends. Nothing changes.

The problem isn't the review itself. It's that reviews happen in a vacuum, disconnected from what the company is actually trying to accomplish. When a software engineer's performance is rated without any reference to the product roadmap, or a sales rep's review ignores the go-to-market pivot from last quarter, the whole process becomes performance for its own sake.

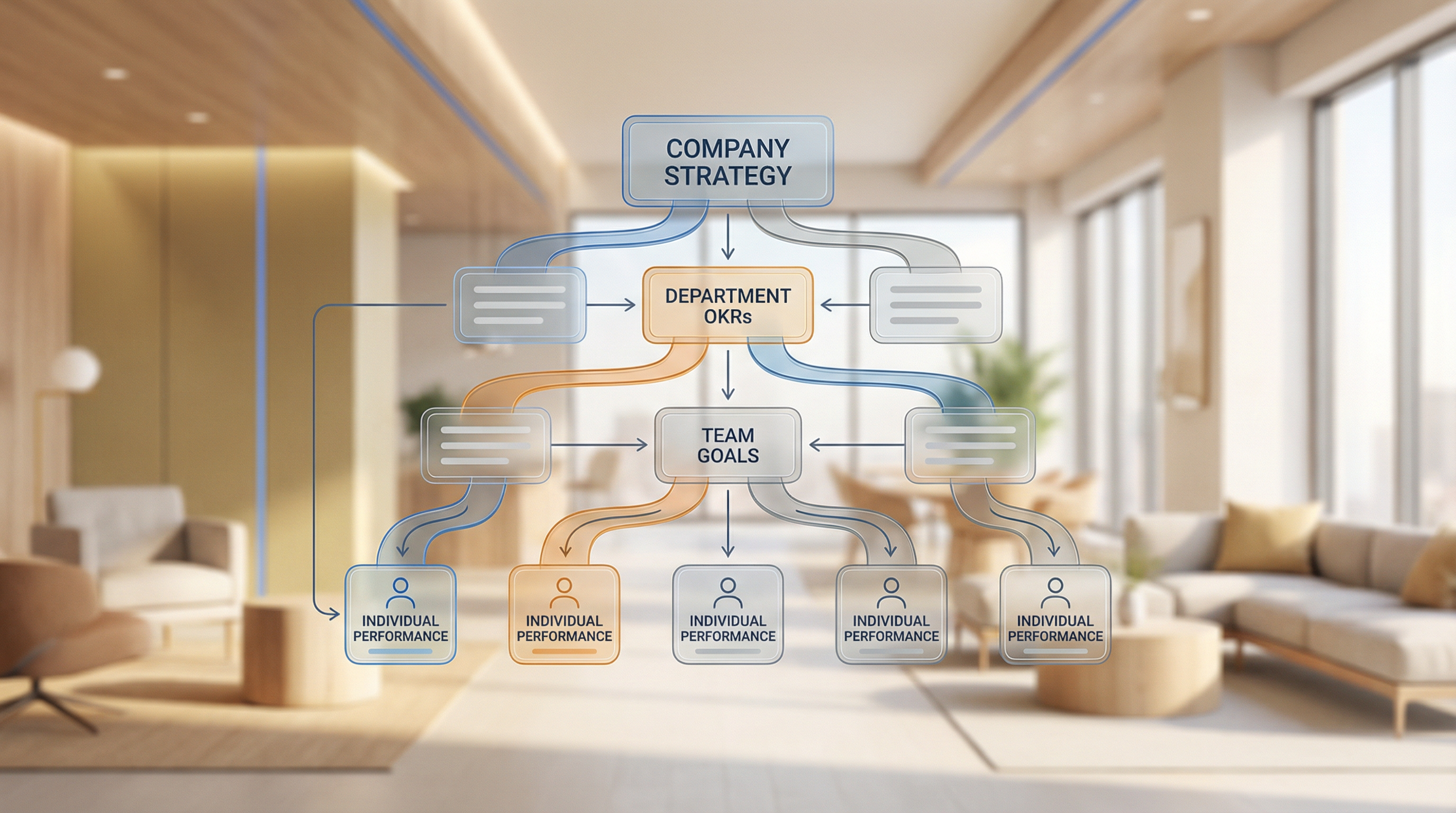

OKR cascading fixes this. Not by adding more bureaucracy, but by creating a direct line from company strategy down to individual contribution, and then using performance reviews to assess how people moved along that line.

Here's how to do it.

What OKR cascading actually means (and what it doesn't)

OKR stands for Objectives and Key Results. Cascading means the company's top-level objectives flow downward into team objectives, then into individual objectives. Each level supports the level above it.

The mistake most companies make: they treat cascading as a copy-paste exercise. The company has an objective to "grow annual recurring revenue by 40%." So the marketing team writes down "grow annual recurring revenue by 40%" as their objective too. That's not cascading. That's repetition.

Real cascading means translation. If the company wants 40% ARR growth, marketing's cascaded objective might be "generate 2,000 qualified opportunities from enterprise accounts." That's specific to what marketing can actually control, and it directly feeds the company number.

The HR team would cascade differently: "Reduce time-to-hire for revenue-critical roles from 60 days to 35 days." That objective also supports the company strategy, but it is specific to what HR controls.

The alignment test: each team's OKRs should answer one question, "If we hit our objectives, does it make the company objective more likely to happen?" If yes, the cascade is working. If not, the team is optimizing for something disconnected from strategy.

Why performance reviews fail without this foundation

Standard performance review questions ("Did you meet your goals? What would you improve? How are you developing professionally?") aren't inherently bad. They're just incomplete.

Without OKR alignment, reviews end up measuring the wrong things:

| What reviews measure without OKRs | What it misses |
|---|---|
| Activity, not impact. "Sent 300 outreach emails" sounds impressive. | If none went to enterprise accounts and that's the strategy, the activity was misdirected. A review measuring only activity scores this as a win. |
| Individual performance, not team performance. One person hits their number. | If they did it by hoarding leads or skipping knowledge-sharing, the team got worse. OKR cascading makes team-level objectives visible so you can evaluate both dimensions. |
| Last-quarter performance, not strategy execution. Reviews default to what happened most recently. | OKR cascading anchors the conversation to a longer arc: did this person contribute to where the company is trying to go? |

The cascading framework for performance review integration

The framework has three layers, and each layer feeds the review differently.

Layer 1: Company OKRs (set annually, reviewed quarterly)

These come from leadership. They're the strategic bets the company believes it must accomplish to succeed in the next 12 months. Examples:

- Increase net revenue retention to 115%

- Launch in two new enterprise verticals

- Reach product-market fit in the SMB segment

Each company objective has 3-5 key results: measurable outcomes that indicate the objective was achieved.

How this feeds reviews: Company OKRs set the context. At the start of any performance conversation, a manager should be able to say: "Here's what the company was working toward this year. Here's where we actually landed." Everything downstream connects to this.

Layer 2: Team OKRs (set quarterly, translated from company OKRs)

Each team (product, sales, marketing, engineering, HR) takes the company OKRs and translates them into team-level objectives. The translation question: "What does our team specifically need to deliver to make the company OKR more likely?"

This is where most organizations fall short. Teams often set objectives based on what they were doing anyway, then claim it connects to strategy. The discipline is to start from the company objective and work backwards.

How this feeds reviews: Team OKRs are the shared context for an entire team's performance reviews. A manager should review team OKR progress before any individual review. It tells you whether the team was pulling in the right direction, which matters for interpreting individual contributions.

Layer 3: Individual OKRs (set quarterly, translated from team OKRs)

The individual level is where cascading gets personal. Each person's objectives should clearly connect to their team's objectives. The translation question: "What does this person specifically need to do to help the team hit its objectives?"

For a senior software engineer on a product team:

- Team OKR: Reduce time-to-value for new customers from 30 days to 14 days

- Individual OKR: Ship onboarding flow redesign by end of Q2, reducing steps from 12 to 7

The individual OKR is specific, time-bound, and clearly feeds the team objective.

How this feeds reviews: Individual OKRs become the primary structure for performance reviews. Managers walk through the person's objectives: which were hit, which weren't, and why. The conversation stays grounded in something real.
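
If you track OKRs in a spreadsheet or script, the three layers can be modeled as objectives that point at their parent. The sketch below is one possible shape, not a prescribed tool: the `Objective` class, the `cascade_problems` function, and the two "hypothetical" entries are illustrative names invented here. It only checks the mechanical part of the cascade (every objective links one level up and isn't a copy of its parent). Whether hitting an objective really makes the parent more likely is still a judgment call for the manager.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Objective:
    """One objective at any level of the cascade (illustrative structure)."""
    level: str                      # "company", "team", or "individual"
    owner: str
    statement: str
    parent: Optional["Objective"] = None


# The level each objective is expected to roll up to.
EXPECTED_PARENT_LEVEL = {"team": "company", "individual": "team"}


def cascade_problems(objectives: list[Objective]) -> list[str]:
    """Return readable problems; an empty list means the links are intact."""
    problems = []
    for obj in objectives:
        if obj.level == "company":
            continue  # top of the cascade, nothing to roll up to
        expected = EXPECTED_PARENT_LEVEL[obj.level]
        if obj.parent is None:
            problems.append(f"{obj.owner}: no parent objective")
        elif obj.parent.level != expected:
            problems.append(f"{obj.owner}: parent should be a {expected} objective")
        elif obj.parent.statement.strip().lower() == obj.statement.strip().lower():
            problems.append(f"{obj.owner}: copies its parent instead of translating it")
    return problems


company = Objective("company", "Company", "Grow annual recurring revenue by 40%")
marketing = Objective(
    "team", "Marketing",
    "Generate 2,000 qualified opportunities from enterprise accounts",
    parent=company,
)
# Hypothetical entries that show the two most common breaks in a cascade.
copied = Objective("team", "Hypothetical team", "Grow annual recurring revenue by 40%", parent=company)
orphan = Objective("individual", "Hypothetical employee", "Improve outreach templates")

for problem in cascade_problems([company, marketing, copied, orphan]):
    print(problem)
```

The marketing objective passes. The copied objective and the unlinked individual objective are flagged, which are the copy-paste and no-context failures in code form.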

Running the performance review conversation with OKRs

An OKR-aligned quarterly performance conversation has four parts and takes about 45 minutes.

- Context (5 minutes). Start by reviewing company and team OKR progress. The manager shares where the company stood going into the quarter, where team OKRs landed, and any major strategy shifts. This sets the stage so the individual review doesn't happen in isolation.

- OKR review (20 minutes). Walk through each of the person's objectives from the quarter. For each: what was the objective and key results? What actually happened? What was in their control versus not? What did they learn? Fact-based first, judgment second. Don't immediately grade success or failure; understand what happened and why before evaluating.

- Contribution to team and company objectives (10 minutes). Zoom out. Beyond the individual's own OKRs, how did they contribute to the team's progress? Did they help colleagues hit their objectives? Were they a bottleneck anywhere? Individual OKRs don't capture everything. This section surfaces contributions that wouldn't appear in a simple scorecard.

- Forward-looking alignment (10 minutes). Set up the next quarter. Review the company and team OKRs for the coming period. Work together to translate those into the person's individual OKRs for next quarter. The review ends by creating the alignment that makes the next review more meaningful.

Common failure modes

Cascading as a reporting exercise. If OKRs are just something people fill out to satisfy HR, they won't drive behavior. The cascade has to be tied to real decisions: resource allocation, hiring, project prioritization. If managers don't make decisions based on OKRs, employees won't take them seriously.

Too many objectives. The power of OKRs is focus. If a person has seven objectives in a quarter, they have no priority. Three objectives is the right ceiling. If a manager wants to add a fourth, they need to remove one.

OKRs set without context. Individual OKRs that are set in a one-on-one without reference to team objectives aren't cascades. They're just goal-setting. The manager needs the team OKRs visible during individual OKR-setting conversations.

Grading that kills honesty. If hitting 100% of OKRs is what gets someone a good review, they'll set easy objectives. If missing an OKR is automatically negative, they'll game the results. OKRs should be ambitious: 70% completion is often a good quarter. Reviews should reward quality of thinking and effort, not just the final number.
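
To make "70% completion" concrete for key results written as "from X to Y," one common convention is to measure how much of the distance from baseline to target was covered. The sketch below assumes that linear convention, which is not the only way to score OKRs. The function name `key_result_progress` and the actual value of 42.5 days are made up for illustration.

```python
def key_result_progress(start: float, target: float, actual: float) -> float:
    """Fraction of the distance from start to target that was covered.

    Works for both "increase" and "reduce" key results because the sign of
    (target - start) cancels out. Results can fall below 0 or exceed 1.
    """
    if target == start:
        raise ValueError("target must differ from the starting value")
    return (actual - start) / (target - start)


# HR key result from earlier: time-to-hire from 60 days to 35 days.
# The actual value of 42.5 days is a made-up illustration.
progress = key_result_progress(start=60, target=35, actual=42.5)
print(f"{progress:.0%}")  # prints 70%
```

A result like this shows how far the number moved, not why. The "why" still belongs to the review conversation.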

Lagging indicator focus. Revenue, NPS, and retention are outcomes of other work. If a person's OKRs are all lagging indicators, reviews become a discussion of things they couldn't directly control. The best OKRs mix the two: leading indicators (the activities and outputs that should drive the outcomes) alongside the lagging outcomes themselves.

What good looks like: a full cascade example

Here's a complete cascade for a company with ARR growth as a top-level objective.

Company key results in full: Close 40 new enterprise accounts (ACV > $150K) / Expand net revenue retention from 105% to 115% / Launch two new product verticals with >10 paying customers each

| Level | Objective | Sample Key Results |
|---|---|---|
| Company | Grow ARR from $12M to $18M | 40 new enterprise logos / NRR 115% / 2 new verticals |
| Sales Team | Generate and close enough enterprise pipeline to hit 40 new logos | 200 qualified enterprise opps / Deal cycle <90 days / 35% win rate on RFPs |
| Account Executive | Build and close enterprise pipeline that supports team quota attainment | 25 enterprise opps qualified from outbound / 10 past legal review / 6 new enterprise closes |

The performance review conversation: "You qualified 22 of 25 targeted opportunities. You progressed 8 past legal, not 10. You closed 5 enterprise logos versus the 6 target. Company came in at 34 new logos against a 40 target, so this mattered."

The conversation is grounded. No vague impressions. No debate about whether the person worked hard or had a good attitude. The OKRs tell the story; the review explores why.
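
For readers who want the arithmetic behind that conversation, here is a small sketch that turns the targets and actuals into attainment percentages. The `attainment` helper is an illustrative name, and it assumes each count started from zero at the beginning of the quarter.

```python
# (label, target, actual) taken from the example conversation above.
account_executive = [
    ("Enterprise opps qualified from outbound", 25, 22),
    ("Opps past legal review", 10, 8),
    ("New enterprise closes", 6, 5),
]
company_logos = ("New enterprise logos (company)", 40, 34)


def attainment(target: float, actual: float) -> float:
    """Actual as a fraction of target; assumes the count started from zero."""
    return actual / target


for label, target, actual in account_executive + [company_logos]:
    print(f"{label}: {actual}/{target} = {attainment(target, actual):.0%}")
```

That works out to 88%, 80%, and 83% for the account executive, against 85% for the company. The percentages give the manager a starting point. The review itself is where the reasons behind each gap get explored.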

Making it work in practice

OKR cascading for performance reviews isn't a one-quarter project. The first time you run it will be messy. OKRs will be poorly written. Some will be unmeasurable. Some managers will resist the structure.

A few things that help:

Write OKRs collaboratively. If managers hand down OKRs without input, employees don't own them. Give people a voice in setting their objectives, within the constraints of what the team needs.

Review OKRs monthly, not just at review time. Monthly check-ins on OKR progress surface problems before they become review surprises. A manager who discovers in November that an employee's Q3 OKR was never tracked has found out too late to do anything about it.

Tie calibration to OKR data. When managers calibrate performance ratings across teams, OKR progress should be the primary input. If a manager claims an employee deserves a high rating but their OKRs were missed, that needs an explanation. The data creates accountability.

Separate OKRs from the job description. There are things people are expected to do that wouldn't appear in OKRs: showing up reliably, communicating well, handling the routine work of their role. OKRs measure progress on strategic priorities. Baseline expectations need to be managed separately, or you create a system where people optimize for OKRs at the expense of everything else.

The payoff for HR and the business

When performance reviews connect to OKRs, a few things shift:

Managers have an easier time giving honest feedback. It's harder to deflect when the conversation is anchored in measurable objectives the person set themselves.

Employees understand why they're being evaluated the way they are. They can see the line from their work to the company's priorities. That clarity matters for retention: people stay where they feel their work has clear purpose.

Leadership gets performance data that means something. Instead of aggregate ratings disconnected from strategy execution, they can see which teams are hitting OKRs and which aren't, and use that to inform decisions about investment, restructuring, and development.

Compensation and promotion decisions get easier to defend. When someone's performance rating reflects documented OKR progress, there's less room for bias and politics to drive outcomes.

Performance reviews that exist in isolation from strategy aren't performance management. They're paperwork. OKR cascading is what transforms them into something worth doing.

FAQ

How do OKRs differ from traditional performance goals?

Traditional performance goals are often set annually and focused on personal development or activity metrics. OKRs are set quarterly, tied explicitly to company strategy, and measured by outcomes rather than activities. The cascading structure is also distinctive: in most goal-setting systems, individual goals don't have a clear connection to company priorities.

What if the company's strategy changes mid-year?

OKRs can and should be revised when strategy shifts. A company that holds employees to objectives set before a major market pivot is measuring irrelevant things. The quarterly cadence of OKR review is partly designed to accommodate this; teams can reset objectives each quarter to reflect current strategy.

How do you handle employees whose work doesn't map cleanly to company OKRs?

Some roles (legal, finance, certain operations functions) support the business without directly driving revenue or growth metrics. The cascading approach still works, but the team OKRs might cover efficiency, risk reduction, or capacity. "Reduce contract review cycle from 14 days to 7 days" is a legitimate team OKR for a legal team, even though it doesn't directly map to ARR growth.

How many OKRs should one person have?

Three is the standard recommendation and, as noted under common failure modes, the right ceiling. Two is fine for complex roles. Any more than three dilutes focus. Each objective should have 2-4 key results.
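
If OKRs live in a structured form, these counts are easy to check automatically before a quarter starts. The sketch below is a hypothetical lint, not part of any OKR tool. The function name `okr_shape_warnings` is invented here, and the default limits (three objectives, 2-4 key results each) are adjustable parameters.

```python
def okr_shape_warnings(
    okrs: dict[str, list[str]],
    max_objectives: int = 3,
    min_krs: int = 2,
    max_krs: int = 4,
) -> list[str]:
    """Flag a person's OKR set when the counts fall outside the given limits."""
    warnings = []
    if len(okrs) > max_objectives:
        warnings.append(
            f"{len(okrs)} objectives; recommended maximum is {max_objectives}"
        )
    for objective, key_results in okrs.items():
        if not min_krs <= len(key_results) <= max_krs:
            warnings.append(
                f"'{objective}' has {len(key_results)} key results; "
                f"expected {min_krs}-{max_krs}"
            )
    return warnings


# The account executive from the cascade example: one objective, three key results.
account_executive_okrs = {
    "Build and close enterprise pipeline that supports team quota attainment": [
        "25 enterprise opps qualified from outbound",
        "10 past legal review",
        "6 new enterprise closes",
    ],
}
print(okr_shape_warnings(account_executive_okrs))  # prints []
```

A check like this only catches shape problems. It says nothing about whether the objectives are the right ones.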

Can OKRs replace annual performance reviews?

Some companies have moved to quarterly OKR-based reviews and eliminated the annual cycle entirely. This works when the culture supports honest quarterly conversations and when managers are trained to do them well. For companies new to OKRs, running quarterly OKR check-ins alongside the existing annual review (using OKRs to inform it) is a less disruptive starting point.